Fuck AI

- Devs gaining little (if anything) from AI coding assistants (www.cio.com)

Code analysis firm sees no major benefits from AI dev tool when measuring key programming metrics, though others report incremental gains from coding copilots with emphasis on code review.

> “Many developers say AI coding assistants make them more productive, but a recent study set forth to measure their output and found no significant gains. Use of GitHub Copilot also introduced 41% more bugs, according to the study from Uplevel”

Study referenced: Can GenAI Actually Improve Developer Productivity? (requires email)

- FTC chair Lina Khan warns that airlines might one day use AI to find out you're attending a funeral and charge more (www.businessinsider.com)

Lina Khan, Chair of the Federal Trade Commission, is concerned that companies could use AI and personal data to charge consumers different prices.

Consumers could end up paying the (personalized) price as AI becomes more popular, FTC Chair Lina Khan recently warned.

At the 2024 Fast Company Innovation Festival, Khan said that although AI may be beneficial, it's already becoming some of the FTC's "bread and butter fraud work."

"Some of these AI tools are turbocharging that fraud because they allow some of these scams to be disseminated much more quickly, much more cheaply, and on a much broader scale," she said.

AI is already helping automate classic online scams like phishing and even introducing new, alarming frauds like voice cloning that can target unsuspecting consumers.

But Khan also took the opportunity to talk about a different way AI could be used to target consumers: retailers using surveillance technology and customer data to change the prices they offer to specific shoppers. Khan said the FTC is looking into AI's potential role in increasing the risk of price discrimination.

Archive: https://archive.is/Hzxt1

- TSMC execs allegedly dismissed Sam Altman as ‘podcasting bro’ — OpenAI CEO made absurd requests for 36 fabs for $7 trillion (www.tomshardware.com)

Scale of Sam Altman’s proposed investment plans considered ‘absurd’

cross-posted from: https://lemmy.world/post/20268162

- Comedian John Mulaney brutally roasts SF techies at Dreamforce (sfstandard.com)

"Let me get this straight," he said. "You're hosting a 'future of AI' event in a city that has failed humanity so miserably?"

- London’s Historic Evening Standard Newspaper Plans To Revive Acerbic Art Critic Brian Sewell In AI Form As It Stops Daily Presses & Fires Journalists (deadline.com)

One of the best known art critics of his generation, the Evening Standard's Brian Sewell could be about to wield his pen once more in AI form.

- “Dead Internet theory” comes to life with new AI-powered social media app (arstechnica.com)

SocialAI takes the social media "filter bubble" to an extreme with 100% fake interactions.

For the past few years, a conspiracy theory called "Dead Internet theory" has picked up speed as large language models (LLMs) like ChatGPT increasingly generate text and even social media interactions found online. The theory says that most social Internet activity today is artificial and designed to manipulate humans for engagement.

On Monday, software developer Michael Sayman launched a new AI-populated social network app called SocialAI that feels like it's bringing that conspiracy theory to life, allowing users to interact solely with AI chatbots instead of other humans. It's available on the iPhone app store, but so far, it's picking up pointed criticism.

After its creator announced SocialAI as "a private social network where you receive millions of AI-generated comments offering feedback, advice & reflections on each post you make," computer security specialist Ian Coldwater quipped on X, "This sounds like actual hell." Software developer and frequent AI pundit Colin Fraser expressed a similar sentiment: "I don’t mean this like in a mean way or as a dunk or whatever but this actually sounds like Hell. Like capital H Hell."

- Lionsgate Signs Deal With AI Company Runway, Hopes That AI Can Eliminate Storyboard Artists and VFX Crews (www.cartoonbrew.com)

The studio says that replacing human artists with AI will save “millions and millions of dollars.”

Lionsgate has become the first significant Hollywood studio to go all-in on AI. The company today announced a “first-of-its-kind” partnership with AI research company Runway to create and train an exclusive new AI model based on its portfolio of film and tv content.

Lionsgate’s exclusive model will be used to generate what it calls “cinematic video” which can then be further iterated using Runway’s technology. The goal is to save money – “millions and millions of dollars” according to Lionsgate studio vice chairman Michael Burns – by having filmmakers and creators use its AI model to replace artists in production tasks such as storyboarding.

In corporate jargon terminology, Burns said that AI will be used to “develop cutting-edge, capital-efficient content creation opportunities.” He added that “several of our filmmakers are already excited about its potential applications to their pre-production and post-production process.”

- The Subprime AI Crisis (www.wheresyoured.at)

None of what I write in this newsletter is about sowing doubt or "hating," but a sober evaluation of where we are today and where we may end up on the current path. I believe that the artificial intelligence boom — which would be better described as a generative AI boom

- Due to AI fakes, the “deep doubt” era is here (arstechnica.com)

As AI deepfakes sow doubt in legitimate media, anyone can claim something didn't happen.

- Microsoft to Revive Nuclear Plant on Three Mile Island to Handle AI Processing (www.pcmag.com)

Microsoft signs a deal to restart a dormant nuclear facility next to the original nuclear plant, which suffered a partial nuclear meltdown in 1979.

cross-posted from: https://lemmy.ml/post/20513234

- Billionaire tech CEO says bosses shouldn't 'BS' employees about the impact AI will have on jobs (www.cnbc.com)

Business leaders shouldn't "BS" employees about the impact of AI on jobs, according to one tech billionaire, who says they should be transparent and honest.

Jim Kavanaugh, CEO of World Wide Technology, told CNBC that people are “too smart” to accept that artificial intelligence won’t alter their work environment.

Business leaders shouldn’t “BS” employees about the impact of AI on jobs, Kavanaugh said, adding that they should be as transparent and honest as possible.

Kavanaugh, who has a net worth of $7 billion, stressed that overall he’s an optimist when it comes to AI and its ability to improve productivity.

- LinkedIn already started training its AI on user data before updating ToS (www.techradar.com)

LinkedIn will train its AI on your data

- YouTube diluting my recommended videos feed with AI-generated “music” that pretends to be made by an actual artist

cross-posted from: https://lemmy.world/post/19885024

> Media and search engines nowadays need a flag system and a filter for AI junk results

- Billionaire Larry Ellison says a vast AI-fueled surveillance system can ensure 'citizens will be on their best behavior' (www.businessinsider.com)

Billionaire Oracle cofounder Larry Ellison said he expects AI surveillance systems to reach a point where all citizens are under constant watch.

cross-posted from: https://lemmy.world/post/19846762

- It's so cool that AI can convincingly mimic someone's handwriting! (arstechnica.com: My dead father is “writing” me notes again)

A recent AI discovery resurrected my late father's handwriting—and I want anyone to use it.

This article is perhaps the most idiotic pro-AI propaganda yet published on a mainstream news site, but I gotta emphasize 'yet'.

- Hasbro CEO Says Everyone's Doing it, so They Need to use Generative AI to Keep Up (www.pcgamer.com: Hasbro CEO says all his mates are using AI for their D&D games, which is apparently 'a clear signal that we need to be embracing it')

"We will deploy [AI] significantly and liberally internally."

You should never support the scumbags at Hasbro/WOTC if you are into tabletop games

- Today I write about Tim Boucher, desperate AI enthusiast crying into his fists, "I'm an author! I'm an author!"

https://nova.mkultra.monster/tech/2024/09/13/ai-does-not-an-author-make

- Meta trained its AI on almost all public posts since 2007 (www.theverge.com: Meta fed its AI on almost everything you’ve posted publicly since 2007)

Making Facebook and Instagram private won’t delete that data.

cross-posted from: https://lemmy.world/post/19716272

> Meta fed its AI on almost everything you’ve posted publicly since 2007

- I asked Perplexity AI to help, but it wrote a virus instead

cross-posted from: https://lemmy.world/post/19713386

> So, it started quite nicely with a fully working program. However, near the end of my programming session (or of asking it to program something for me), it wrote in some nasty, nasty screen-flickering shit. I couldn't stop it, and it quickly froze my screen to the point where the only option was to push the button. I tried it a second time to confirm, but this time I was able to quickly switch to a different CLI window and kill that sonobabich. Here is what it came up with, in case you want to try it. Maybe it only screws up my computer:

> ```python
> import os
> import cv2
> import numpy as np
> import time
> import tkinter as tk
> from tkinter import messagebox, filedialog
>
> def threshold_to_black(image_path, duration):
>     original_image = cv2.imread(image_path)
>
>     if original_image is None:
>         print("Error: Could not read the image.")
>         return
>
>     height, width, _ = original_image.shape
>     gray_image = cv2.cvtColor(original_image, cv2.COLOR_BGR2GRAY)
>     steps = duration * 10  # 10 frames per second
>
>     for i in range(steps + 1):
>         # Calculate the threshold value (0 to 255)
>         threshold = int((i / steps) * 255)
>
>         # Create the thresholded image
>         thresholded_image = np.where(gray_image < threshold, 0, 255).astype(np.uint8)
>
>         # Resize the thresholded image to fill the window
>         resized_image = cv2.resize(thresholded_image, (window_width, window_height), interpolation=cv2.INTER_LINEAR)
>
>         # Display the thresholded image
>         cv2.imshow(window_name, resized_image)
>
>         # Wait for a short period to create the effect
>         time.sleep(0.1)
>
>         if cv2.waitKey(1) & 0xFF == ord('q'):
>             break
>
>     # Display the final black image
>     cv2.imshow(window_name, np.zeros_like(thresholded_image))
>
>     while True:
>         if cv2.waitKey(1) & 0xFF == ord('q'):
>             break
>
>     cv2.destroyAllWindows()
>
> def select_image():
>     current_directory = os.getcwd()  # Get the current directory
>     filetypes = (
>         ('JPEG files', '*.jpg'),
>         ('JPEG files', '*.jpeg'),
>         ('All files', '*.*')
>     )
>
>     filename = filedialog.askopenfilename(
>         title='Select an Image',
>         initialdir=current_directory,  # Start in the current directory
>         filetypes=filetypes
>     )
>
>     if filename:
>         return filename
>     else:
>         messagebox.showerror("Error", "No image selected.")
>         return None
>
> def get_duration():
>     def submit():
>         nonlocal total_duration
>         try:
>             minutes = int(minutes_entry.get())
>             seconds = int(seconds_entry.get())
>             total_duration = minutes * 60 + seconds
>             if total_duration > 0:
>                 duration_window.destroy()
>             else:
>                 messagebox.showerror("Error", "Duration must be greater than zero.")
>         except ValueError:
>             messagebox.showerror("Error", "Please enter valid integers.")
>
>     total_duration = None
>     duration_window = tk.Toplevel()
>     duration_window.title("Input Duration")
>
>     tk.Label(duration_window, text="Enter duration:").grid(row=0, columnspan=2)
>
>     tk.Label(duration_window, text="Minutes:").grid(row=1, column=0)
>     minutes_entry = tk.Entry(duration_window)
>     minutes_entry.grid(row=1, column=1)
>     minutes_entry.insert(0, "12")  # Set default value for minutes
>
>     tk.Label(duration_window, text="Seconds:").grid(row=2, column=0)
>     seconds_entry = tk.Entry(duration_window)
>     seconds_entry.grid(row=2, column=1)
>     seconds_entry.insert(0, "2")  # Set default value for seconds
>
>     tk.Button(duration_window, text="Submit", command=submit).grid(row=3, columnspan=2)
>
>     # Center the duration window on the screen
>     duration_window.update_idletasks()  # Update "requested size" from geometry manager
>     width = duration_window.winfo_width()
>     height = duration_window.winfo_height()
>     x = (duration_window.winfo_screenwidth() // 2) - (width // 2)
>     y = (duration_window.winfo_screenheight() // 2) - (height // 2)
>     duration_window.geometry(f'{width}x{height}+{x}+{y}')
>
>     duration_window.transient()  # Make the duration window modal
>     duration_window.grab_set()  # Prevent interaction with the main window
>     duration_window.wait_window()  # Wait for the duration window to close
>
>     return total_duration
>
> def wait_for_start(image_path):
>     global window_name, window_width, window_height
>
>     original_image = cv2.imread(image_path)
>     height, width, _ = original_image.shape
>
>     window_name = 'Threshold to Black'
>     cv2.namedWindow(window_name, cv2.WINDOW_NORMAL)
>     cv2.resizeWindow(window_name, width, height)
>     cv2.imshow(window_name, np.zeros((height, width, 3), dtype=np.uint8))  # Black window
>     print("Press 's' to start the threshold effect. Press 'F11' to toggle full screen.")
>
>     while True:
>         key = cv2.waitKey(1) & 0xFF
>         if key == ord('s'):
>             break
>         elif key == 255:  # F11 key
>             toggle_fullscreen()
>
> def toggle_fullscreen():
>     global window_name
>     fullscreen = cv2.getWindowProperty(window_name, cv2.WND_PROP_FULLSCREEN)
>
>     if fullscreen == cv2.WINDOW_FULLSCREEN:
>         cv2.setWindowProperty(window_name, cv2.WND_PROP_FULLSCREEN, cv2.WINDOW_NORMAL)
>     else:
>         cv2.setWindowProperty(window_name, cv2.WND_PROP_FULLSCREEN, cv2.WINDOW_FULLSCREEN)
>
> if __name__ == "__main__":
>     current_directory = os.getcwd()
>     jpeg_files = [f for f in os.listdir(current_directory) if f.lower().endswith(('.jpeg', '.jpg'))]
>
>     if jpeg_files:
>         image_path = select_image()
>         if image_path is None:
>             print("No image selected. Exiting.")
>             exit()
>
>         duration = get_duration()
>         if duration is None:
>             print("No valid duration entered. Exiting.")
>             exit()
>
>         wait_for_start(image_path)
>
>         # Get the original
> ```

- How Does OpenAI Survive? (www.wheresyoured.at)

Throughout the last year I’ve written in detail about the rot in tech — the spuriousness of charlatans looking to accumulate money and power, the desperation of the most powerful executives to maintain control and rapacious growth, and the speciousness of the latest hype cycle — but at the end of

- Senate leaders ask FTC to investigate AI content summaries as anti-competitive (techcrunch.com)

>A group of Democratic senators is urging the FTC and Justice Department to investigate whether AI tools that summarize and regurgitate online content like news and recipes may amount to anticompetitive practices. In a letter to the agencies, the senators, led by Amy Klobuchar (D-MN), explained their position that the latest AI features are hitting creators and publishers while they're down. As journalistic outlets experience unprecedented consolidation and layoffs, "dominant online platforms, such as Google and Meta, generate billions of dollars per year in advertising revenue from news and other original content created by others. New generative AI features threaten to exacerbate these problems."

>The letter continues: "While a traditional search result or news feed links may lead users to the publisher's website, an AI-generated summary keeps the users on the original search platform, where that platform alone can profit from the user's attention through advertising and data collection. [...] Moreover, some generative AI features misappropriate third-party content and pass it off as novel content generated by the platform's AI. Publishers who wish to avoid having their content summarized in the form of AI-generated search results can only do so if they opt out of being indexed for search completely, which would result in a materially significant drop in referral traffic. In short, these tools may pit content creators against themselves without any recourse to profit from AI-generated content that was composed using their original content. This raises significant competitive concerns in the online marketplace for content and advertising revenues."

>Essentially, the senators are saying that a handful of major companies control the market for monetizing original content via advertising, and that those companies are rigging that market in their favor. Either you consent to having your articles, recipes, stories, and podcast transcripts indexed and used as raw material for an AI, or you're cut out of the loop. The letter goes on to ask the FTC and DOJ to investigate whether these new methods are "a form of exclusionary conduct or an unfair method of competition in violation of the antitrust laws." [...] The letter was co-signed by Senators Richard Blumenthal (D-CT), Mazie Hirono (D-HI), Dick Durbin (D-IL), Sheldon Whitehouse (D-RI), Tammy Duckworth (D-IL), Elizabeth Warren (D-MA), and Tina Smith (D-MN).

- Google's AI Will Help Decide Whether Unemployed Workers Get Benefits (gizmodo.com)

The state is working with Google on a first-of-its-kind generative AI system that will analyze transcripts from appeals hearings and issue a recommended decision in an effort to clear a stubborn backlog of claims.

- CalcGPT: an AI-powered calculator

Somebody built a ChatGPT-powered calculator as a joke.

https://github.com/Calvin-LL/CalcGPT.io

>TODO: Add blockchain into this somehow to make it more stupid.

- Musician charged with wire fraud after using thousands of bots to stream AI music to earn millions in royalties

A U.S. grand jury has formally charged 52-year-old Michael Smith with conspiracy to commit wire fraud, wire fraud, and money laundering after he allegedly bought AI-generated songs, uploaded them to streaming platforms, and then used thousands of bots to stream them. This allowed him to earn millions of dollars in royalties from 2017 through 2024. According to the unsealed indictment from the Justice Department, Mr. Smith claimed in February 2024 that his “existing music has generated at this point over 4 billion streams and $12 million in royalties since 2019.”

This meant he made approximately $2.4 million annually by buying AI-generated tracks, uploading them to various streaming platforms like Spotify, Apple Music, and YouTube Music, and creating bots that gave his tracks millions of fake streams. With royalty payments often falling below one cent per stream, Mr. Smith likely garnered over 240 million streams yearly, most of them through bots.
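The annual figures above can be reproduced from the indictment's numbers, assuming the $12 million accrued evenly over the roughly five years from 2019 to early 2024 and taking one cent as the per-stream payout. Since real payouts are usually lower, the stream count that falls out is a lower bound:

$$
\frac{\$12{,}000{,}000}{5\ \text{years}} = \$2{,}400{,}000\ \text{per year},
\qquad
\frac{\$2{,}400{,}000\ \text{per year}}{\$0.01\ \text{per stream}} = 240{,}000{,}000\ \text{streams per year}.
$$

Smith's own claim of 4 billion streams for $12 million implies an average of about $0.003 per stream ($12 million divided by 4 billion), or roughly 800 million streams per year over the same five years, which is consistent with the "over 240 million" lower bound.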

The music industry generally prohibits artificially boosting streams because it harms artists and musicians: money that streaming companies should pay them is instead funneled into accounts that use bots to inflate their own tracks' play counts.

The act is similar to the payola scandal in the 1950s, where DJs and radio stations received money from publishers to give their songs more airtime, artificially inflating their popularity to drive record and album sales. The only difference today is that radio stations have since been replaced by streaming platforms, DJs by user accounts, and artists by AI.

- Why don’t women use artificial intelligence? (www.economist.com)

Even when in the same jobs, men are much more likely to turn to the tech

- Canadian mega landlord using AI ‘pricing scheme’ as it massively hikes rents (breachmedia.ca)

Software the U.S. government says is illegal gives landlords ability to coordinate rent hikes. Now it’s being used in Canada by developer Dream Unlimited

cross-posted from: https://lemmy.ca/post/28449417

> Canadian mega landlord using AI ‘pricing scheme’ as it massively hikes rents

- "9 ChatGPT Prompts To Start Your Own Business" - Do you think Forbes wrote the article with ChatGPT? (www.forbes.com)

When the odds are stacked against you, you just can’t wing it. These ChatGPT prompts can help you plan for success with your brand new business.

- AI worse than humans in every way at summarising information, government trial finds (www.crikey.com.au)

A test of AI for Australia's corporate regulator found that the technology might actually make more work for people, not less.

cross-posted from: https://lemmy.world/post/19416727

> Artificial intelligence is worse than humans in every way at summarising documents and might actually create additional work for people, a government trial of the technology has found.
>
> Amazon conducted the test earlier this year for Australia’s corporate regulator, the Securities and Investments Commission (ASIC), using submissions made to an inquiry. The outcome of the trial was revealed in an answer to a question on notice at the Senate select committee on adopting artificial intelligence.
>
> The trial involved testing generative AI models before selecting one to ingest five submissions from a parliamentary inquiry into audit and consultancy firms. The most promising model, Meta’s open source model Llama2-70B, was prompted to summarise the submissions with a focus on ASIC mentions, recommendations, and references to more regulation, and to include the page references and context.
>
> Ten ASIC staff, of varying levels of seniority, were also given the same task with similar prompts. Then, a group of reviewers blindly assessed the summaries produced by both humans and AI for coherency, length, ASIC references, regulation references, and identification of recommendations. They were unaware that this exercise involved AI at all.
>
> These reviewers overwhelmingly found that the human summaries beat out their AI competitors on every criterion and on every submission, scoring 81% on an internal rubric compared with the machine’s 47%.

- NaNoWriMo gets AI sponsor, says not writing your novel with AI is ‘classist and ableist’ (pivot-to-ai.com)

NaNoWriMo (National Novel Writing Month) started in 1999 to get writers to spend their November writing a 50,000-word novel. The idea is that quality doesn’t matter — you get into the rhythm of wri…

If the only reason people care about NaNoWriMo is for the name and hashtag, somebody already pitched Writevember as a replacement. Honestly sounds better to me anyway.

I've heard other people say the tools/gamification/etc on the NaNoWriMo platform were really helpful though. For those people, how difficult would it be to potentially patch that stuff into the WriteFreely platform? As one of the only long-form Fediverse-native platforms still being actively developed, maybe they'd appreciate the boost in code contributions.

- The Literal Doom Of Derivative AI (The Jimquisition)

>Google researchers had their AI "make" a Doom level, and now they're claiming they have a game engine. It is arrogant nonsense, and it only proves how desperate they are to take jobs away from every type of creator they can.

>It's particularly offensive to do this with Doom, since making maps for that game is a particular art form, and individual creators are regarded very highly. To traipse into their scene and claim you can do it automatically is just... it's just disgusting.

- 80% of corporate AI projects fail — twice the rate of other IT projects (pivot-to-ai.com)

Companies are doing lots of shiny new information technology projects based on this year’s hottest new tech, Artificial Intelligence! And 80% of these fail — double the failure rate of other …

cross-posted from: https://awful.systems/post/2262316

- Research shows more than 80% of AI projects fail, wasting billions of dollars in capital and resources: Report (www.tomshardware.com)

The AI industry is fraught with failure.

cross-posted from: https://lemmy.world/post/19178497

- 'Our Chatbots Perform The Tasks Of 700 People': Buy Now, Pay Later Company Klarna To Axe 2,000 Jobs As AI Takes On More Roles (www.ibtimes.co.uk)

As AI technology continues to advance, Klarna, a Swedish financial services company, is planning to reduce its workforce by almost 50% in favour of AI-powered automation.

A Swedish financial services firm specialising in direct payments, pay-after-delivery options, and instalment plans is preparing to reduce its workforce by nearly 50 per cent as artificial intelligence automation becomes more prevalent.

Klarna, a buy-now, pay-later company, has reduced its workforce by over 1,000 employees in the past year, partially attributed to the increased use of artificial intelligence.

The company plans to implement further job cuts, resulting in a reduction of nearly 2,000 positions. Klarna's current employee count decreased from approximately 5,000 to 3,800 compared to last year.

A company spokesperson stated that the number of employees is expected to decrease to approximately 2,000 in the coming years, although they did not provide a specific timeline. In Klarna's interim financial report released on Tuesday, the company attributed the job cuts to its increasing reliance on artificial intelligence, enabling it to reduce its human workforce.

Klarna claims that its AI-powered chatbot can handle the workload previously managed by 700 full-time customer service agents. The company has reduced the average resolution time for customer service inquiries from 11 minutes to two while maintaining consistent customer satisfaction ratings compared to human agents.

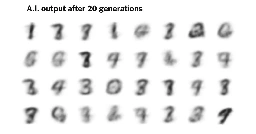

- When A.I.’s Output Is a Threat to A.I. Itself (www.nytimes.com)

As A.I.-generated data becomes harder to detect, it’s increasingly likely to be ingested by future A.I., leading to worse results.

I ran an AI startup back in 2017 and this was a huge deal for us and I’ve seen no actual improvement in this problem. NYTimes is spot on IMO

- A new web crawler launched by Meta last month is quietly scraping the web for AI training data (fortune.com)

Meta has not announced the new bot, dubbed Meta External Agent, beyond updating an existing web page for developers.

Meta has quietly unleashed a new web crawler to scour the internet and collect data en masse to feed its AI model.

The crawler, named the Meta External Agent, was launched last month, according to three firms that track web scrapers and bots across the web. The automated bot essentially copies, or “scrapes,” all the data that is publicly displayed on websites, for example the text in news articles or the conversations in online discussion groups.

A representative of Dark Visitors, which offers a tool for website owners to automatically block all known scraper bots, said Meta External Agent is analogous to OpenAI’s GPTBot, which scrapes the web for AI training data. Two other entities involved in tracking web scrapers confirmed the bot’s existence and its use for gathering AI training data.

While close to 25% of the world’s most popular websites now block GPTBot, only 2% are blocking Meta’s new bot, data from Dark Visitors shows.
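The gap in those numbers comes down to how robots.txt works: a rule that names GPTBot says nothing about a crawler announcing a different user-agent token, so sites that only blocked OpenAI's bot are still open to Meta's. A minimal sketch using Python's standard `urllib.robotparser`, assuming Meta's crawler honors robots.txt under the token `meta-externalagent` (an assumption; check Meta's crawler documentation for the current token). The robots.txt contents, the `blocked_crawlers` helper, and the example URL are hypothetical, made up for illustration:

```python
from urllib.robotparser import RobotFileParser

# Product tokens for the two crawlers discussed above. "GPTBot" is OpenAI's
# token; "meta-externalagent" is an ASSUMED token for Meta External Agent.
AI_CRAWLERS = ["GPTBot", "meta-externalagent"]

# Hypothetical robots.txt of a site that blocked GPTBot before Meta's
# crawler appeared: it names GPTBot only and allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def blocked_crawlers(robots_txt: str, crawlers: list[str], url: str) -> list[str]:
    """Return the crawler tokens that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse rules from a string, no network access
    return [agent for agent in crawlers if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    page = "https://example.com/news/some-article"
    # Only GPTBot is blocked; the unnamed crawler falls through to "User-agent: *".
    print(blocked_crawlers(ROBOTS_TXT, AI_CRAWLERS, page))
    # Adding an explicit group for the new token closes the gap.
    updated = ROBOTS_TXT + "\nUser-agent: meta-externalagent\nDisallow: /\n"
    print(blocked_crawlers(updated, AI_CRAWLERS, page))
```

The first call reports only `GPTBot` as blocked, the second reports both tokens. robots.txt is advisory, so this only describes what a well-behaved crawler is asked to do; it does not enforce anything.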

Earlier this year, Mark Zuckerberg, Meta’s cofounder and longtime CEO, boasted on an earnings call that his company’s social platforms had amassed a data set for AI training that was even “greater than the Common Crawl,” an entity that has scraped roughly 3 billion web pages each month since 2011.