morrowind (@morrowind@lemm.ee) · Posts: 10 · Comments: 12 · Joined 1 mo. ago

Technically it supports fewer languages than Whisper, 40 vs 99

The main problem isn't "bother", it's training data. You need hundreds of thousands of hours of high-quality transcribed audio to train models like these, and that just doesn't exist for, like, Zulu or whatever

Dolphin: A Large-Scale Automatic Speech Recognition Model for Eastern Languages

I want to clarify something. Reranker is a general term that can refer to any model used for reranking. It is independent of implementation.

What you refer to

> because reranker models look at the two pieces of content simultaneously and can be fine tuned to the domain in question. They shouldn't be used for the initial retrieval because the evaluation time is O(n²) as each combination of input

is a specific implementation known as CrossEncoder, which is common for reranking models but not retrieval ones, for the reasons you described. But you can also use any other architecture as a reranker
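To make the distinction concrete, here is a rough sketch in plain Python. Everything in it is a toy assumption: `bi_encoder_style_score`, `cross_encoder_style_score`, `rerank`, and `encoder_calls` are hypothetical names, and the scorers are simple word-overlap stand-ins rather than real models. The point is only that `rerank` accepts any scoring function, and that a scorer which must see each (query, document) pair together needs far more model calls than one that encodes each text once.

```python
from typing import Callable, List, Sequence, Tuple

# A scorer is any function (query, doc) -> relevance score.
Scorer = Callable[[str, str], float]


def _tokens(text: str) -> set:
    """Toy 'encoding': the set of lowercase words (stand-in for an embedding)."""
    return set(text.lower().split())


def bi_encoder_style_score(query: str, doc: str) -> float:
    """Bi-encoder style: each text is encoded on its own, then compared.

    Here the comparison is Jaccard overlap of the two independent encodings.
    """
    q, d = _tokens(query), _tokens(doc)
    union = q | d
    return len(q & d) / len(union) if union else 0.0


def cross_encoder_style_score(query: str, doc: str) -> float:
    """Cross-encoder style: the scorer looks at the pair jointly.

    Toy joint feature: a bonus when the whole query appears as a phrase in
    the doc, which cannot be computed from two separate encodings.
    """
    phrase_bonus = 1.0 if query.lower() in doc.lower() else 0.0
    return bi_encoder_style_score(query, doc) + phrase_bonus


def rerank(query: str, candidates: Sequence[str], score: Scorer, top_k: int = 3) -> List[str]:
    """Reranking is a role: reorder candidates with whatever scorer is passed in."""
    ranked = sorted(candidates, key=lambda doc: score(query, doc), reverse=True)
    return list(ranked[:top_k])


def encoder_calls(n_queries: int, n_docs: int) -> Tuple[int, int]:
    """Model forward passes needed to score every query against every doc.

    Returns (bi_encoder_calls, cross_encoder_calls).
    """
    return n_queries + n_docs, n_queries * n_docs


docs = ["the cat sat on the mat", "mat cleaning tips", "a cat video"]
print(rerank("cat sat", docs, cross_encoder_style_score, top_k=1))
print(encoder_calls(1000, 1000))
```

Swapping `cross_encoder_style_score` for `bi_encoder_style_score` in the `rerank` call changes nothing about the reranking step itself. With 1000 queries and 1000 documents the pairwise scorer needs 1,000,000 calls versus 2,000, which is why pairwise models usually only see a shortlist.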

Thumbnail looks a little odd when small. You may want to go for a more digital llama aesthetic

NotaGen: Advancing Musicality in Symbolic Music Generation with Large Language Model Training Paradigms

autotracers can't generate svgs from text

Claude frequently draws SVGs to illustrate things for me (I'm guessing it's in the prompt), but even though it's better at it than all the other models, it still kinda sucks. It's just a fundamentally dumb task for a pure language model, similar to the ARC-AGI benchmark. It makes more sense for a vision model, and trying to get an LLM to do it is a waste

what is the license? The link on hf just 404s

EXAONE Deep ━ Setting a New Standard for Reasoning AI - LG AI Research News

Very similar to chain of draft but seems more thorough

Sketch-of-Thought: Efficient LLM Reasoning with Adaptive Cognitive-Inspired Sketching

It matches R1 in the given benchmarks. R1 has 671B params (37B activated) while this only has 32B

insane, absolutely insane

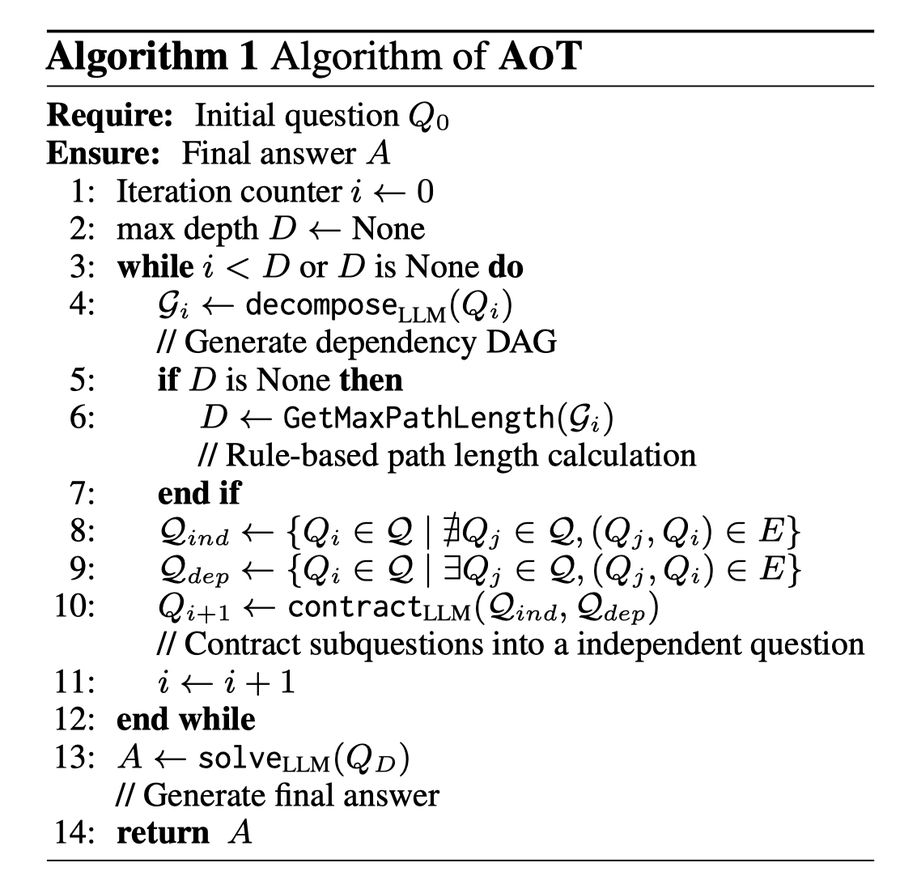

Atom of Thoughts (AOT): lifts gpt-4o-mini to 80.6% F1 on HotpotQA, surpassing o3-mini and DeepSeek-R1

good luck trying to run a video model locally

Unless you have top tier hardware