one more shitty techbrofad down

What’s actually going to kill LLMs is when the sweet VC money runs out and the vendors have to start charging what it actually costs to run.

You can run it on your own machine. It won't work on a phone yet, but I guarantee chip manufacturers are working on a custom SoC right now which will be able to run a rudimentary local model.

You can already run 3B LLMs on cheap phones using MLCChat; it's just hella slow.

Both Apple and Google have integrated machine learning optimisations, specifically for running ML algorithms, into their processors.

As long as you have something optimized to run the model, it will work fairly well.

They don't want to have independent ML chips, they want it baked into every processor.

Joke's on them because I can't afford their overpriced phones 😎

That's fine, Qualcomm has followed suit, and Samsung is doing the same.

I'm sure Intel and AMD are not far behind. They may already be doing this, I just haven't kept up on the latest information from them.

Eventually all processors will have it, whether you want it or not.

I'm not saying this is a good thing, I'm saying this as a matter of fact.

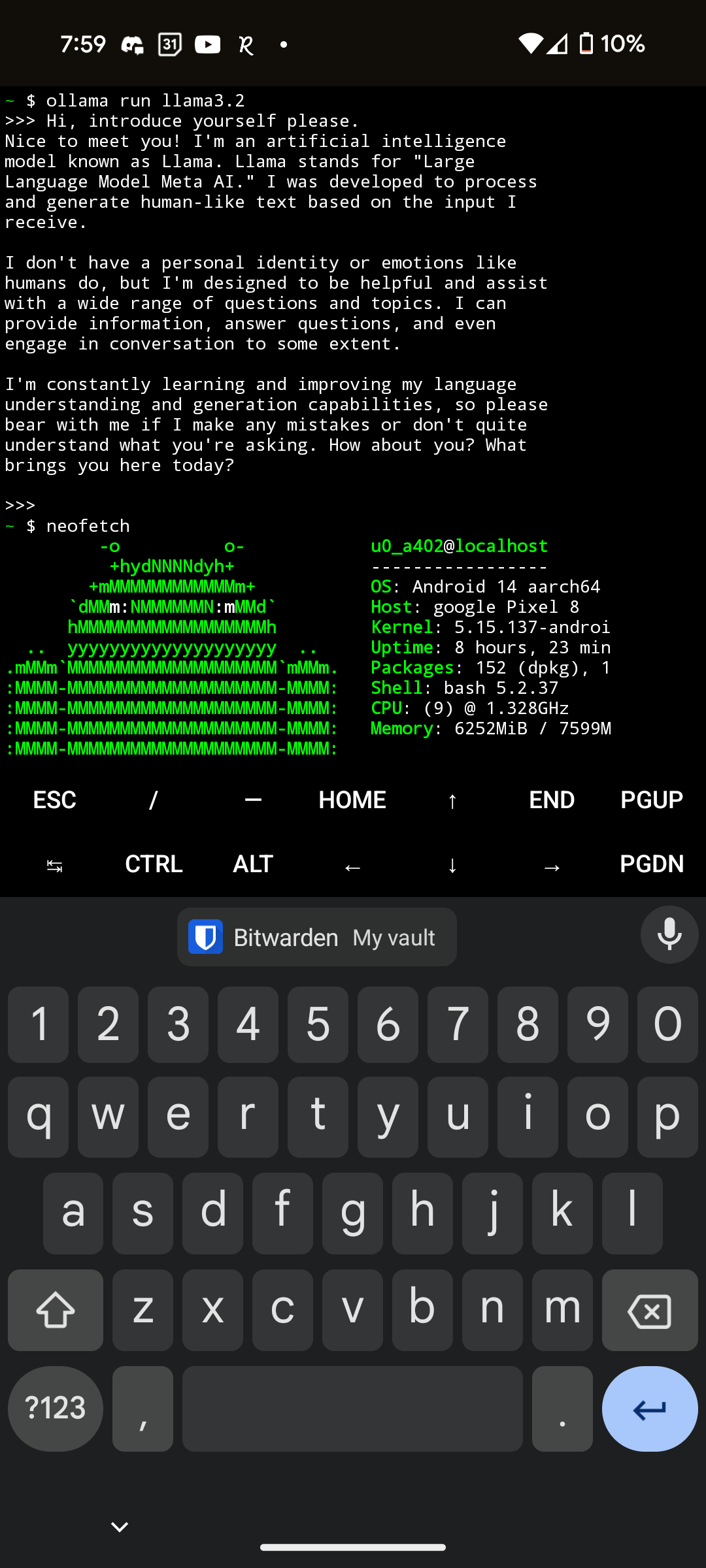

It will run on a phone right now. This is Llama 3.2 on a Pixel 8.

The only drawback is that it requires a lot of RAM, so I needed to close all other applications, but that could be fixed easily on the next phone. Other than that, it was quite fast and only took ~3 GB of storage!

This isn't the case. Midjourney doesn't receive any VC money since it has no investors, and this ignores genned imagery made locally on your own rig.

Yeah, but that's pretty alright all told: the tech bros do not have the basic competency to do that, and they can't sell it to dollar-sign-eyed CEOs.
